As a computer science or IT student, learning how to use Kafka and understanding its relevance to different businesses and technologies will help prepare you for your future career. However, advanced data software like Kafka can be tricky to understand and even more difficult to master. As businesses start to prioritize event-driven data streams, tools like Kafka that let you organize, aggregate, and analyze those streams are only going to become more widely adopted. The existence of Kafka Connect, which enables Kafka to integrate with external systems, makes it even more likely you’ll need to use it in the future. If you’re interested in taking your knowledge of Kafka to the next level, read on to learn more about how it works and who can benefit from Apache Kafka software.

What is Apache Kafka?

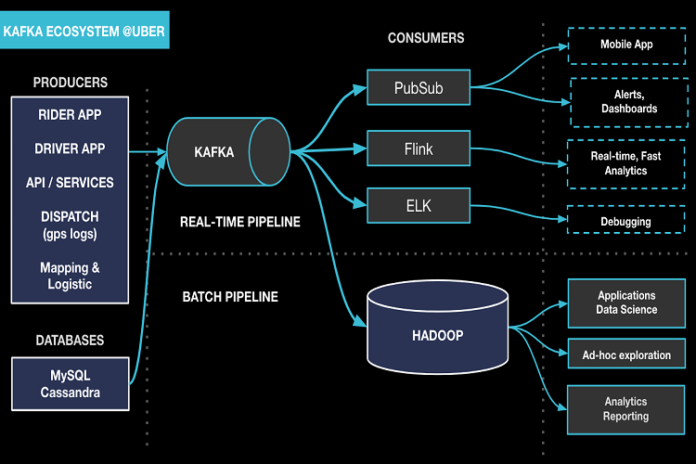

Apache Kafka can be used in a number of ways, but it is primarily a streaming engine that can collect, cache, and process large amounts of data in real time. Kafka is open source, written in Scala and Java, and maintained by the Apache Software Foundation; it was originally created at LinkedIn. It can handle functions like distributed streaming, pipelining, and replay of data feeds. Kafka is known as a broker-based solution, meaning that it maintains its streams of data within a cluster of servers, called brokers.

A Kafka cluster can span multiple data centers while still providing data persistence, because it stores streams of records across multiple server instances, organized into topics. A topic is a named stream of records: applications publish records to it, and other applications subscribe to it to register interest in that particular stream of data. Topics can be divided further into partitions. New records are continuously appended to the end of a partition, so each partition forms an ordered commit log.
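To make the topic, partition, and commit log ideas more concrete, here is a minimal sketch in plain Python. It is a toy in-memory model, not Kafka's actual implementation or client API: the `Topic` class and its `publish` and `read` methods are hypothetical names invented for illustration, and the CRC32 hash is only a stand-in for however a real client chooses a partition.

```python
import zlib


class Topic:
    """Toy in-memory model of a topic (hypothetical, not a Kafka API)."""

    def __init__(self, name, num_partitions=3):
        self.name = name
        # Each partition is an append-only list that acts as a commit log.
        self.partitions = [[] for _ in range(num_partitions)]

    def publish(self, key, value):
        """Append a record and return (partition, offset)."""
        # Assumption: a deterministic hash of the key picks the partition,
        # so records with the same key stay in order relative to each other.
        partition = zlib.crc32(key.encode("utf-8")) % len(self.partitions)
        log = self.partitions[partition]
        log.append((key, value))
        # The offset is the record's position in that partition's log.
        return partition, len(log) - 1

    def read(self, partition, offset=0):
        """Return every record in a partition starting from an offset."""
        # Reading never removes records, so a subscriber can replay
        # the log from any earlier offset.
        return self.partitions[partition][offset:]


if __name__ == "__main__":
    topic = Topic("page-views")
    partition, first_offset = topic.publish("user-1", "viewed home page")
    topic.publish("user-1", "viewed cart")
    for record in topic.read(partition, first_offset):
        print(record)
```

Because reading does not delete anything, a subscriber that falls behind or restarts can pick up again from the last offset it processed, which is the idea behind the replay of data feeds mentioned earlier.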

Who does Apache Kafka software benefit?

Now that you understand the basics, let’s talk about who can best make use of Apache Kafka and the features it offers. One impressive thing about Kafka is how many different uses it has. Companies like TIBCO have begun to build streaming data pipelines and apps with enterprise-class support for Apache Kafka. TIBCO takes advantage of Kafka’s capabilities by incorporating it into its TIBCO Messaging product.

LinkedIn is one example of a company that relies on Apache Kafka to perform a number of functions across its platform, including activity tracking, message exchanges, and metric gathering. Kafka was originally developed for in-house stream processing at LinkedIn and then open-sourced. Now that it has been widely adopted externally, its use cases have grown far beyond what it was originally designed to do for LinkedIn’s networking platform.

Walmart is another example of a major corporation that benefits from Apache Kafka. Walmart utilizes Kafka to power its real-time inventory system. Walmart’s event-streaming-based system is innovative and scalable and has helped a large business manage the details of its supply chain network in an effective and efficient way.

Apache Kafka is known throughout the technology world for its versatility and usefulness in data collection and management. Businesses ranging from networking platforms to car companies to casinos have all derived significant benefits from Kafka’s technology. Almost any company that collects and uses data based on consumer events can find a use for Apache Kafka, especially through integration with external systems via Kafka Connect. Students and young professionals who aren’t already familiar with Kafka would be wise to learn how to use it as soon as they can. Don’t wait until a job requires proficiency to learn your way around commonly used software like Kafka. Invest time and energy in mastering the tools you’ll need while you’re in school so you can get off to a quick start as soon as you graduate.